Vision Pro Resources

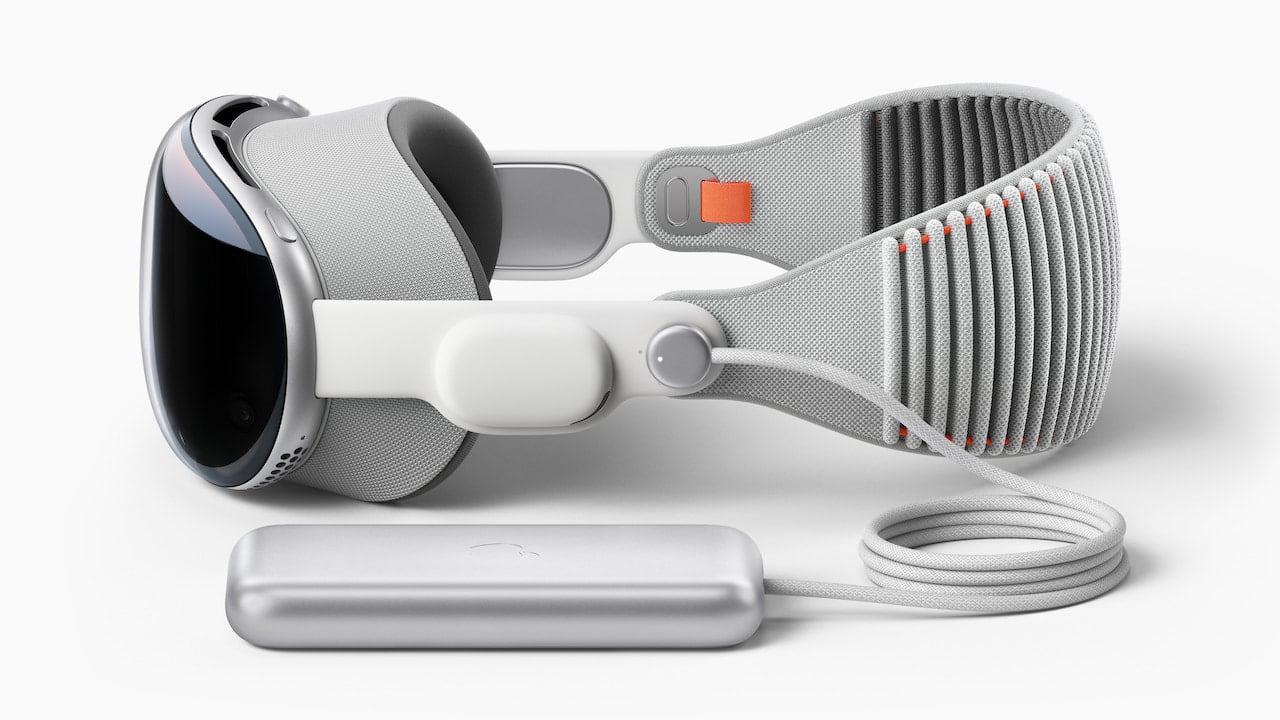

Apple announced Vision Pro during the keynote address at WWDC 2023. Below, I'm compiling the best resources and information I've found so far about Vision Pro programming in particular and spatial computing in general.

If you have any questions I might be able to answer, or if you know of any additional resources that should be included here, please drop me a line!

Speeds and Feeds

These are the technical details we know about so far.

| Spec | Details |
| --- | --- |
| Release date | Early 2024 |
| Price | $3,499 |
| CPU | Apple M2 for main compute |
| | Apple R1 for real-time processing |
| Displays | 4K per eye |
| | 6 µm pixel size |
| | ~23M pixels total |
| | 90 Hz refresh rate, switchable to 96 Hz for 24 FPS content |
| Sound | Two speakers with spatial sound |
| Sensors | Twelve cameras for head tracking, eye tracking, gesture recognition, room-mapping, photo and video capture |
| | LiDAR for room-mapping as well as photo and video capture |
| | Six microphones for voice input and audio capture |
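
A couple of the display numbers above are easier to appreciate with some quick arithmetic. The sketch below is my own back-of-the-envelope check, not anything from Apple: it assumes the ~23M pixels are split evenly between the two displays, and it assumes the 96 Hz mode exists because 96 is a whole multiple of 24 while 90 is not. The `refreshesPerFrame` helper is a name I made up for illustration.

```swift
// Back-of-the-envelope checks on the display figures in the table above.

let totalPixels = 23_000_000          // "~23M pixels total" (approximate)
let pixelsPerEye = totalPixels / 2    // assumption: an even split between the two displays
let uhd4KPixels = 3840 * 2160         // a 4K UHD panel has 8,294,400 pixels

print("Pixels per eye: ~\(pixelsPerEye)")
print("4K UHD panel:   \(uhd4KPixels)")

/// Returns how many display refreshes each content frame is held for,
/// or nil when the refresh rate is not a whole multiple of the frame rate
/// (in which case frames have to be shown for uneven lengths of time).
func refreshesPerFrame(refreshHz: Int, contentFPS: Int) -> Int? {
    guard refreshHz % contentFPS == 0 else { return nil }
    return refreshHz / contentFPS
}

// 90 / 24 = 3.75, so 24 FPS content does not fit evenly into a 90 Hz display.
print(refreshesPerFrame(refreshHz: 90, contentFPS: 24) as Any)

// 96 / 24 = 4, so every frame can be shown for exactly four refreshes.
print(refreshesPerFrame(refreshHz: 96, contentFPS: 24) as Any)
```

If the even split holds, each eye gets roughly 11.5M pixels, which is comfortably more than a 4K television's 8.3M.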

Articles and Reviews

These are a few of the better articles and reviews I've read so far. They're from writers who are familiar with Apple and who have given me good information in the past. Let's see if we can learn something from them this time too!

I think John Gruber's first impressions of Vision Pro and visionOS is the best article I've read so far. It's written mostly from a consumer's or user's point of view.

Andy Matuschak has some great insights from a user interface and user experience point of view.

Naturally, Ben Thompson has a lot to say about Apple Vision as well.

David Smith gave his impressions of Vision Pro after receiving a demo at WWDC 2023. I would say he seems excited and enthusiastic about the platform!

WWDC Videos

Most of what we know about programming for Vision Pro comes from the WWDC 2023 session videos. However, Apple has been working on spatial computing and augmented reality for a long time. There are also older WWDC session videos about ARKit and Reality Composer which I'll try to dig up and add here.

Eventually, I hope to have links to all of the relevant session videos here and organize them in some sensible way. For now, I'm linking only to the sessions I've watched, thought were good, and have something to say about beyond what's already on Apple's developer site.

Monday

Monday kicked off with some big announcements! There were rumors that it would be the longest keynote ever. I don't know if it turned out that way, but it certainly felt like a lot!

- Keynote
  - Tim Cook begins his presentation of Vision Pro, visionOS, and spatial computing with his announcement of "One More Thing!" at about the one hour and twenty-one minute mark. Apple reveals many conceptual and technical details in the keynote announcement, but also leaves plenty of open questions.
  - I was perhaps most surprised by what was barely shown: gaming and "traditional" immersive experiences. Apple seems to think they've figured out what mixed reality (or "spatial computing" as they call it) is good for, and it's not what I had expected.

- Platforms State of the Union
  - More details about the new visionOS platform begin almost exactly one hour into the Platforms State of the Union. Here we start to get a better idea of how the platform will look from a user's perspective (if you'll pardon the pun) and what tools we'll have as developers to build great experiences.
  - If you're already up to speed on the basics of the keynote announcement from press coverage, this is probably a good place to start to see which sessions or documentation you'd like to dive deeper into.

Tuesday

Tuesday's sessions were jam-packed with information about visionOS. I can't think of the last time I saw a day of WWDC so focused on one platform. Maybe when the iOS SDK launched?

- Build spatial experiences with RealityKit
  - This session is where I chose to start. It talks about using RealityKit (which I knew a little about but hadn't used before) to bring 3D models into your visionOS app. RealityKit uses an entity-component-system model to bring interactivity and animation to static 3D models. If it were just for games, I guess it would be a game engine.
  - This session talks a little bit about the difference between windows, volumes, and spaces, but I think "Get started with building apps for spatial computing" is the better session for getting an overview of these basics.

- Design for spatial input
  - This session is a deeper dive into spatial input principles and the standard input methods and gestures, with design tips for making interfaces that are easy to interact with using these new input methods.
  - This is probably a good session for anyone who will be developing for the platform.

- Design for spatial user interfaces
  - This session dives deeper yet into using familiar (and some new) user interface elements for user input in a spatial environment.
  - I think this is probably a good session for most people who will develop for the platform, but its focus is definitely on "productivity" style user interface components and not on games or immersive experiences.

- Get started with building apps for spatial computing
  - This session breaks down the details and differences of windows, volumes, and spaces. It's the place to start for figuring out which of these basic user interface... containers? paradigms? you'll want to use in your application.

- Meet Reality Composer Pro
  - If you're going to use RealityKit, you probably won't want to set up your scenes all in code. Reality Composer Pro is like Interface Builder for RealityKit: you can compose scenes, preview them, build shaders, and more. I think this session is a must-watch if you think you might use RealityKit.

- Meet SwiftUI for spatial computing
  - I haven't used SwiftUI yet, but I'm getting the impression that I will if I want to do anything with spatial computing. It looks like this is going to be Apple's preferred (and in some cases only?) way to build user interfaces for visionOS. If you've already been using SwiftUI, you've probably got a leg up!

- Principles of spatial design
  - Now that we've seen some cool stuff and have an idea what visionOS and spatial computing are about, this session brings us back around to fundamental principles of good design in the spatial environment.
  - I came away with the impression that Apple has been working on this for a long time and has done its homework here. These ideas and best practices may evolve over time, but I think they'll prove as foundational as Apple's (and Xerox's and Microsoft's) early research on graphical user interfaces.
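
Looking back at the RealityKit session at the top of this list, here's a minimal sketch of the entity-component-system idea it mentions, written in plain Swift. To be clear, this is not RealityKit's API: the `Component`, `Entity`, `Position`, `Velocity`, and `MovementSystem` names are all made up for illustration. The point is only to show the split between data (components), identity (entities), and behavior (systems).

```swift
// Components are plain data with no behavior of their own.
protocol Component {}

struct Position: Component { var x: Double; var y: Double }
struct Velocity: Component { var dx: Double; var dy: Double }

// An entity is little more than an identity plus a bag of components,
// stored here in a dictionary keyed by component type.
final class Entity {
    let name: String
    private var components: [ObjectIdentifier: Component] = [:]

    init(name: String) { self.name = name }

    func set<C: Component>(_ component: C) {
        components[ObjectIdentifier(C.self)] = component
    }

    func get<C: Component>(_ type: C.Type) -> C? {
        return components[ObjectIdentifier(type)] as? C
    }
}

// A system holds the behavior. On each update it visits every entity
// that has the components it cares about and skips the rest.
struct MovementSystem {
    func update(_ entities: [Entity], deltaTime: Double) {
        for entity in entities {
            guard var position = entity.get(Position.self),
                  let velocity = entity.get(Velocity.self) else { continue }
            position.x += velocity.dx * deltaTime
            position.y += velocity.dy * deltaTime
            entity.set(position)
        }
    }
}

let ball = Entity(name: "ball")
ball.set(Position(x: 0, y: 0))
ball.set(Velocity(dx: 1, dy: 2))

// The table has no Velocity component, so MovementSystem leaves it alone.
let table = Entity(name: "table")
table.set(Position(x: 5, y: 5))

MovementSystem().update([ball, table], deltaTime: 0.5)
```

What I like about this design is that making a static model interactive becomes a matter of attaching another component to it, not of subclassing it.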

Wednesday

- Enhance your spatial computing app with RealityKit
  - This session is a grab-bag of deeper detail on some RealityKit topics like: integrating regular SwiftUI (or UIKit) with RealityView via attachments, anchoring a RealityView to a real-world surface (like a wall or table-top), using particle effects and video in a RealityView, and using portals to connect a virtual world with the real world.
  - Reading between the lines, I think this session hints at the types of experiences Apple thinks work well and that developers will want to create on the platform.

- Explore immersive sound design
  - It's said that sound is the most important part of video, and my own experience seems to confirm this. I hadn't yet given much thought to how that principle would play out in spatial computing, but this session really highlighted for me the importance of sound in making an immersive and usable experience.
  - Aside from pointing out the importance of sound, it also enumerates a variety of important considerations in designing appropriate sound for your experience. This was definitely one of the most eye- (ear-?) opening sessions for me.

- Explore materials in Reality Composer Pro
  - As a developer who's fairly new to 3D, I came away from this session really excited to play around with Reality Composer Pro. The promise of being able to experiment with shaders in real time using a node-based visual editor is very appealing to me. I wouldn't have thought that building custom shaders would make sense for the sort of projects I imagine doing, but this might make it a real possibility!
  - The demo definitely reminded me of the old Quartz Composer, which was also a very cool tool.

- Meet Object Capture for iOS
  - I guess this session is a little off the expected path for visionOS development, but I think an interest in spatial computing will create new demand for 3D assets, particularly models, and this technology might be one way to help satisfy that demand.
  - I think Apple has mostly pitched this as a way to get real-world objects into AR on iOS (and now perhaps the Shared Space on visionOS) for applications like online shopping. However, I couldn't help but think of the applications it might have for artists who are used to working in traditional materials like clay: perhaps it will act as a bridge for them to bring their creations to spatial computing experiences.

- Take SwiftUI to the next dimension
  - Apple seems excited about SwiftUI and I'm sure they wouldn't want it to be left behind for spatial computing. This session covers bringing basic 3D models or a full RealityView into a SwiftUI project, as well as bringing SwiftUI views into a RealityView as attachments. It also covers recognizing new spatial hand gestures in SwiftUI.

- Work with Reality Composer Pro content in Xcode
  - This session picks up where "Meet Reality Composer Pro" and "Explore materials in Reality Composer Pro" leave off: it covers how to load scenes you make in Reality Composer Pro into a RealityView, connect attachments to entities defined in Reality Composer Pro, play audio assets from your Reality Composer Pro scene, and wire up user inputs to custom shaders.
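
To make the last two SwiftUI-flavored sessions a bit more concrete, here's a rough, untested sketch of the two approaches they describe: `Model3D` for dropping a single model into an ordinary SwiftUI layout, and `RealityView` for loading a whole scene of entities. The asset names "Robot" and "Scene" and the `SceneSketchView` type are hypothetical, and this only builds inside a visionOS project with the beta SDK, so treat it as a starting point and not as the way Apple's samples do it.

```swift
import SwiftUI
import RealityKit

// A hypothetical view combining the two approaches from the sessions.
struct SceneSketchView: View {
    var body: some View {
        VStack {
            // Model3D loads and displays a single model asynchronously,
            // much like an image view does for images.
            // "Robot" is a made-up asset name in the app bundle.
            Model3D(named: "Robot")

            // RealityView gives you a RealityKit content container to fill
            // with entities. The closure is async, so loading can be awaited.
            RealityView { content in
                // "Scene" is a made-up asset name. Content authored in
                // Reality Composer Pro usually lives in its own Swift
                // package, in which case you'd pass that package's bundle
                // via the `in:` parameter of this initializer.
                if let scene = try? await Entity(named: "Scene") {
                    content.add(scene)
                }
            }
        }
    }
}
```

As I understand it, `Model3D` is the quick option when you just want to show a model, while `RealityView` is the one to reach for once you need entities, components, or attachments.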

Official Documentation

Apple has a hub for visionOS development that they've been adding to. It started with some basic information about the platform (most of which was shown in the keynote). They've since added links to developer documentation and a beta version of Xcode with support for the visionOS platform.

The developer documentation includes an API reference, sample code, and (I'm happy to report) some guide-style documents to help get you oriented on the platform. Also provided are Human Interface Guidelines updates for visionOS.

I plan to update and organize this section as more documentation becomes available. I'd also like to provide some summaries and walkthroughs for common tasks as time allows and as I digest the documentation myself. Please drop me a note with your topic suggestions!